AI Is Not One Market: The Four Tiers of Intelligence

10 Mar

Founders, we keep talking about AI as if it were one market. It is not. And we keep treating the appetite of the big AI labs as if it had no limit: consumer, corporate, government. No, they won’t take over every market.

I believe that, following an evolutionary process (specialisation to best fit each environment), AI is splitting into at least 4 distinct tiers with different hardware requirements, different economics, different control structures, and different strategic consequences. That split will matter far more than the next model release.

The real question is no longer simply whether models will get smarter. They will. The real question is this: which intelligence becomes abundant, which remains scarce, and, more importantly, who controls each layer.

Once you look at AI that way, two things become clearer.

- The future is unlikely to be defined by one universal model serving everyone equally.

- Startup value will not accrue where most founders think it will.

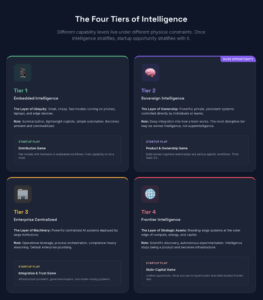

A four-tier architecture is emerging

The biggest driver of the split is users’ need for privacy (who wants to keep feeding OpenAI their most strategic or personal data?), combined with the fact that open-source models make that privacy achievable.

The cleanest way to think about the AI landscape is not “open vs closed” or “local vs cloud,” but a four-tier architecture shaped by a harder constraint: the deployment envelope.

It’s a bit complex, but in short, this ‘deployment envelope’ is determined by some combination of compute intensity, memory footprint, memory bandwidth, latency tolerance, power consumption, autonomy depth, concurrency, and control regime. Those constraints matter because they determine where a system can run, how much it costs to operate, who can own it, and therefore which products and businesses can realistically be built on top of it.

In AI, physics is market structure in disguise.

A system that fits within mass-market hardware and can operate with acceptable latency spreads very widely. A more demanding system may require private workstations, managed enterprise infrastructure, or hyperscale compute. As capability rises, it is not only model quality that matters. It is whether the workload can be sustained economically, thermally, and operationally in a given environment.

This matters even more in an agentic world. Because with agents, AI workloads go BOOOM! 🔥

The real cost unit is no longer “cost per answer.” It is cost per workflow. A single agentic workflow can involve multiple model calls, tools, retries, verification loops, memory loading, and parallel sub-tasks. As the cost of inference collapses, more of those workflows become viable outside the hyperscalers. But the architecture does not flatten completely. The limiting factors shift toward concurrency, state, trust, and integration. Please bear with me.
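To make the shift from “cost per answer” to “cost per workflow” concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the `WorkflowProfile` fields, the retry rate, the token counts, and the price per million tokens are hypothetical values, not measurements.

```python
from dataclasses import dataclass


@dataclass
class WorkflowProfile:
    """Hypothetical shape of one agentic workflow (all fields are illustrative)."""
    model_calls: int          # planned model calls, including parallel sub-tasks
    tokens_per_call: int      # average tokens processed per call
    retry_rate: float         # fraction of planned calls that are repeated once
    verification_calls: int   # extra calls spent checking results


def cost_per_workflow(profile: WorkflowProfile, price_per_million_tokens: float) -> float:
    """Return the inference cost of one workflow run, in the currency of the price."""
    retries = profile.model_calls * profile.retry_rate
    total_calls = profile.model_calls + retries + profile.verification_calls
    total_tokens = total_calls * profile.tokens_per_call
    return total_tokens * price_per_million_tokens / 1_000_000


# Illustrative numbers only.
single_answer = WorkflowProfile(model_calls=1, tokens_per_call=2_000,
                                retry_rate=0.0, verification_calls=0)
agentic_run = WorkflowProfile(model_calls=40, tokens_per_call=2_000,
                              retry_rate=0.25, verification_calls=10)

price = 5.0  # assumed price per million tokens
answer_cost = cost_per_workflow(single_answer, price)
workflow_cost = cost_per_workflow(agentic_run, price)

print(f"cost per answer:   {answer_cost:.4f}")
print(f"cost per workflow: {workflow_cost:.4f}")
print(f"ratio:             {workflow_cost / answer_cost:.0f}x")
```

With these made-up inputs the workflow costs about 60 times a single answer, which is why the unit of account matters more than the headline price per call.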

Tier 1 — Embedded intelligence

The Layer of Ubiquity

This is the bottom layer: small, cheap, fast AI models running on phones (iPhones!), laptops, edge devices, and inside everyday software.

These small systems (under 70B parameters) will handle narrow tasks extremely well: summarization, lightweight copilots, classification, transcription, retrieval, simple automation, and privacy-sensitive on-device tasks. In an increasingly agentic world, this tier can also support lightweight local workflows: a few narrow agents, short-lived coordination loops, and simple automation close to the user.

It will be useful. It will be ubiquitous. It will also be increasingly commoditized. Tier 1 makes intelligence pervasive; it does not make it scarce.

- Startup Play: Distribution. Think Apple Store 2.0

- The Trap: Attractive for adoption, but dangerous for capture. Pure functionality here is rarely enough; it must be paired with hardware distribution or specialized edge environments. Expect economics to be razor thin.

Tier 2 — Sovereign personal and team intelligence

The Layer of Ownership – Where we see a gigantic opportunity for startups.

Tier 2 consists of powerful systems (100-500B parameters with multi-agent capabilities) that individuals, teams, and smaller organizations can control directly: totally private, persistent, personalized intelligence that adapts to users and remembers context over time.

This is the layer where intelligence stops being merely accessed and starts being owned in a meaningful operational sense. The most disruptive tier may not be superintelligence; it may be owned intelligence embedded in your context.

A private system that knows your history, workflow, and priorities may be economically more valuable to you than a remote system that is smarter in the abstract but lives on someone else’s servers and terms.

As inference becomes 1,000x to 10,000x cheaper, increasingly serious agentic workflows move into this layer: persistent assistants, research agents, coding agents, and team-level intelligence fabrics. We strongly believe that the practical case for sovereignty will rapidly become unavoidable. This will mostly mean open-source models hosted on private or sovereign clouds (in the EU for Europeans) rather than on-premise.

Tier 2 is a product and ownership game. Tier 2 may create the most new product categories.

- Startup Play: Product and Ownership. Think SaaS 3.0

- The Opportunity: This is the richest startup layer. The most disruptive AI isn’t “superintelligence”—it is owned intelligence. A private system that lives on your terms is economically more valuable than a smarter remote system that lives on someone else’s servers. Build owned cognitive relationships, don’t just rent inference for SMEs and Corporates.

Tier 3 — Enterprise-scale centralized intelligence

The Layer of Institutional Machinery

Tier 3 is where large corporates and governments deploy powerful centralized AI systems (100-500B parameters, with sustained agent-swarm capabilities running hundreds of agents) that go well beyond personal intelligence but do not need to operate at the absolute frontier. This tier will be mostly addressed by the AI labs and hyperscalers, as well as a small cluster of well-funded outsiders (especially in the EU), offering both closed and open-source models.

Its role is operational leverage: process orchestration, large-scale optimization, compliance-heavy reasoning, and industrial planning. These systems need more power, concurrency, and governance than Tier 2 can sustain. In an agentic world, this is where one sees larger swarms and high-throughput systems operating across departments.

As costs fall, Tier 3 does not disappear; it expands. What was once carefully rationed usage becomes default enterprise plumbing. The key constraints shift to concurrency, state management, permissions, and reliability.

- Capabilities: Process orchestration, compliance-heavy reasoning, industrial planning, and large-scale optimization.

- The Logic: These systems require centralized infrastructure but don’t need to be at the absolute scientific frontier. They sit in the zone where cost, governance, and integration are justified by institutional budgets.

- Startup Play: Integration and Trust. Focus on vertical decision platforms and governance layers.

- The Challenge: This is not a game of viral growth; it is a game of trust, auditability, and deep workflow integration.

- The Opportunity: Infrastructure providers, governance layers, and model-routing systems.

Tier 4 — Frontier intelligence

The Layer of Strategic Assets

At the top sits the bleeding edge: the most advanced systems operating at the outer edge of compute, energy, capital, and scientific ambition. 1T+ parameter systems aiming at Artificial Super Intelligence (ASI).

This tier will not be casually democratized. It is shaped by extreme capital requirements, national interests, and institutional control. This is where intelligence becomes infrastructure rather than just software. Or more bluntly: at the frontier, intelligence stops being a product and starts becoming a strategic asset (think about the OpenAI/Anthropic dealings with the US Dept of War).

Even with aggressive cost declines, Tier 4 remains distinct. While cheaper inference moves many workloads “down” into Tier 2 and 3, the frontier keeps moving. This is the realm of scientific discovery, autonomous experimentation, and strategic simulation.

- Startup Play: Infrastructure and State-Capital game.

- The Opportunity: Limited for most startups. Value accrues to hyperscalers and frontier labs.
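As a rough way to hold the four tiers side by side, the parameter ranges quoted above can be put into a small lookup. This is only a sketch under stated assumptions: the function name `tier_for`, the cutoff of 100 concurrent agents separating Tier 2 from Tier 3, and the choice to return `None` for sizes the text does not assign (70-100B and 500B-1T) are all inventions of this example. Model size is also only one dimension of the deployment envelope, so a real classification would need more inputs.

```python
from typing import Optional

# Assumed cutoff: the text says "multiple" agents for Tier 2 and "hundreds" for Tier 3.
SWARM_THRESHOLD = 100


def tier_for(params_billion: float, concurrent_agents: int) -> Optional[int]:
    """Map a model size (in billions of parameters) and agent count to a tier.

    Returns None for sizes that fall between the ranges given in the text.
    """
    if params_billion < 70:
        return 1  # embedded intelligence
    if 100 <= params_billion <= 500:
        # Tier 2 and Tier 3 share a size range; concurrency separates them here.
        return 3 if concurrent_agents >= SWARM_THRESHOLD else 2
    if params_billion >= 1000:
        return 4  # frontier intelligence
    return None  # 70-100B and 500B-1T are not assigned by the text


for size, agents in [(8, 1), (300, 5), (300, 400), (1500, 1000), (80, 1)]:
    print(f"{size:>5}B params, {agents:>4} agents -> tier {tier_for(size, agents)}")
```

The shared 100-500B range is the interesting part: by the numbers alone Tier 2 and Tier 3 look identical, and what separates them is concurrency and control rather than model size.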

[A caveat on the above framework: the analysis above assumes that over the next five years the effective cost of running AI workloads falls dramatically — on the order of 1,000x, and possibly as much as 10,000x in some parts of the stack. That decline may come from cheaper inference, better chips, lower power per unit of work, improved model efficiency, better routing, quantization, and more efficient agent design.

If that happens, the architecture does not disappear. But the boundaries shift outward. Workloads that feel expensive and centralized today move into private and enterprise-controlled environments tomorrow. What changes is not just cost. What changes is which tier a workload belongs to.]
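To get a feel for what that caveat implies, the sketch below annualises the assumed decline and estimates how long a workload would take to fit a given budget. The function names and the example workload (one that costs 100 times what its target environment can afford today) are hypothetical, and the calculation assumes the decline is spread evenly across the five years, which the text does not claim.

```python
import math


def annual_cost_factor(total_decline: float, years: int) -> float:
    """Yearly factor by which cost must fall to reach total_decline after `years`."""
    return total_decline ** (1 / years)


def years_until_affordable(current_cost: float, budget: float, yearly_factor: float) -> float:
    """Years until a workload's cost drops to the budget at a constant yearly factor."""
    if current_cost <= budget:
        return 0.0
    return math.log(current_cost / budget) / math.log(yearly_factor)


for total in (1_000, 10_000):
    factor = annual_cost_factor(total, 5)
    # Hypothetical workload that is 100x over budget today.
    wait = years_until_affordable(100.0, 1.0, factor)
    print(f"{total:>6}x over 5 years: about {factor:.2f}x cheaper per year, "
          f"a 100x-too-expensive workload fits in about {wait:.1f} years")
```

Under the even-decline assumption, a 1,000x drop over five years works out to roughly a 4x reduction each year, and a workload that is 100x over budget today would fit in a little over three years.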

What this means for startups

Different tiers do not just produce different capabilities; they produce different opportunity sets. That opportunity is not evenly distributed.

- Tier 1 offers scale, but weak scarcity.

- Tier 2 offers large, deep vertical markets where customers care about ownership and continuity, and with them the chance to build durable cognitive products.

- Tier 3 offers large budgets and real pain points above and beyond those of Tier 2, and requires deep institutional embedding.

- Tier 4 may shape the entire landscape, while leaving relatively little room for startups to capture value directly.

That alone should change how founders think about where to play.

A great many AI startups are still being built on the assumption that model capability itself is where durable value lives. That assumption is weak. Borrowed brilliance is not a moat. If the underlying intelligence is rented, improving rapidly, and increasingly substitutable, then the startup sitting on top is standing on borrowed land.

But the directional point is clear: when cognition becomes cheaper, the opportunity does not vanish — it relocates.

Some founders will build in layers where intelligence is already becoming interchangeable. Others will build in layers where ownership, integration, continuity, governance, trust, or execution remain hard to replicate.

We know where we think many of those opportunities sit. We will publish the full map at some point. If you have built your own view of where enduring value is most likely to concentrate, I would be very interested to compare notes. Please reach out.

What will likely get weaker:

The most vulnerable companies sit in the thinnest part of the stack: polished access to someone else’s model without real control over workflow, trust, memory, distribution, or execution. As base models improve, weak AI companies do not become stronger; they become more exposed.

The deeper point:

The most important decisions of the next decade may not be about model architecture alone. They will be about which capabilities live in which tier, who controls access to them, and which actors are allowed to build enduring businesses on top. Once intelligence is distributed across four tiers, the distribution of power follows.

Conclusion

AI is not becoming one thing. It is splitting into layers.

- Tier 1 will make basic intelligence ubiquitous.

- Tier 2 will make higher intelligence ownable by SMEs and small corporates.

- Tier 3 will industrialize higher intelligence inside large institutions.

- Tier 4 will concentrate frontier capability (super intelligence) where capital, compute, and strategic power converge.

The companies that mistake rented capability for durable advantage will struggle.

The companies that understand where scarcity migrates when intelligence becomes abundant will have a chance to matter.